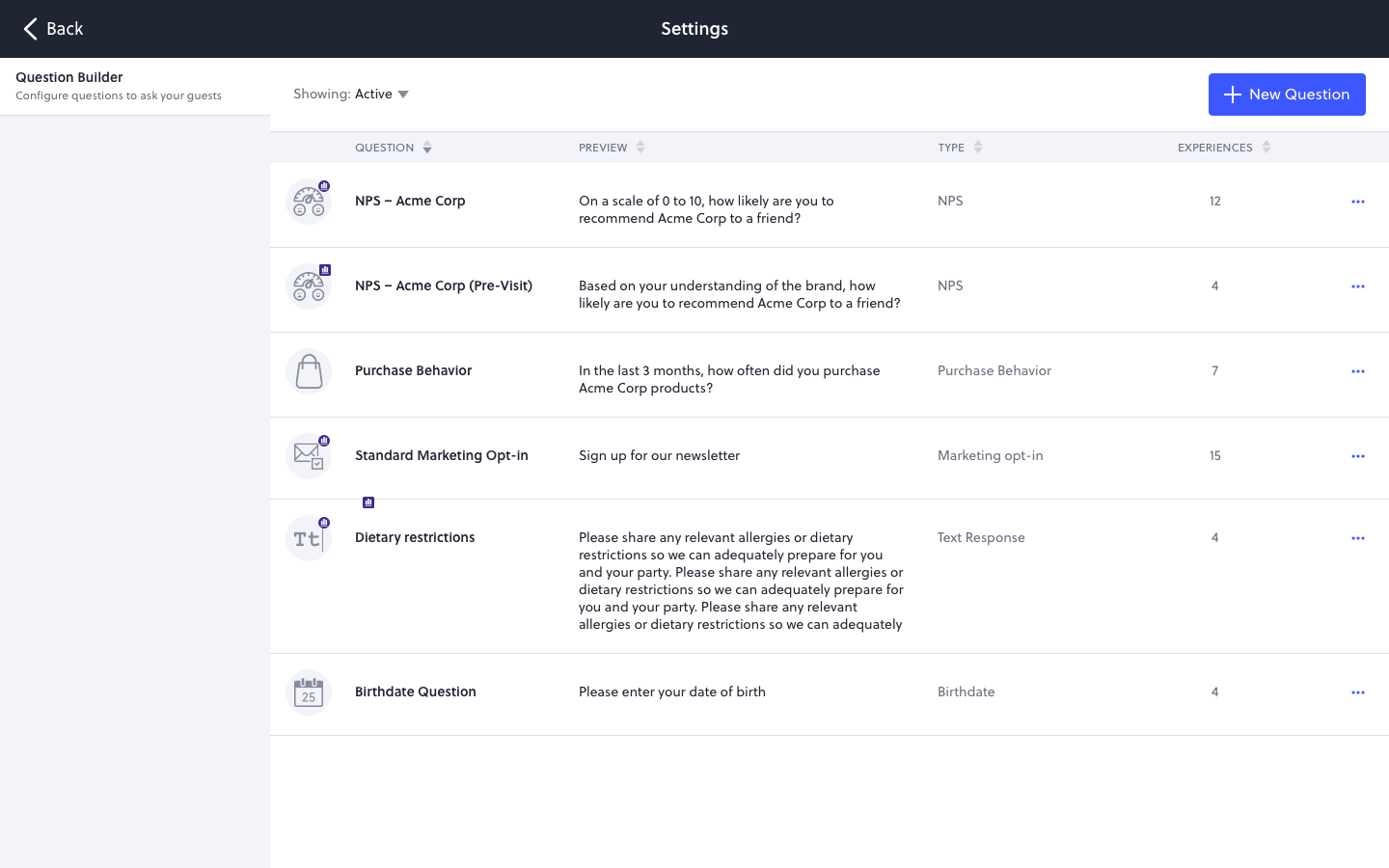

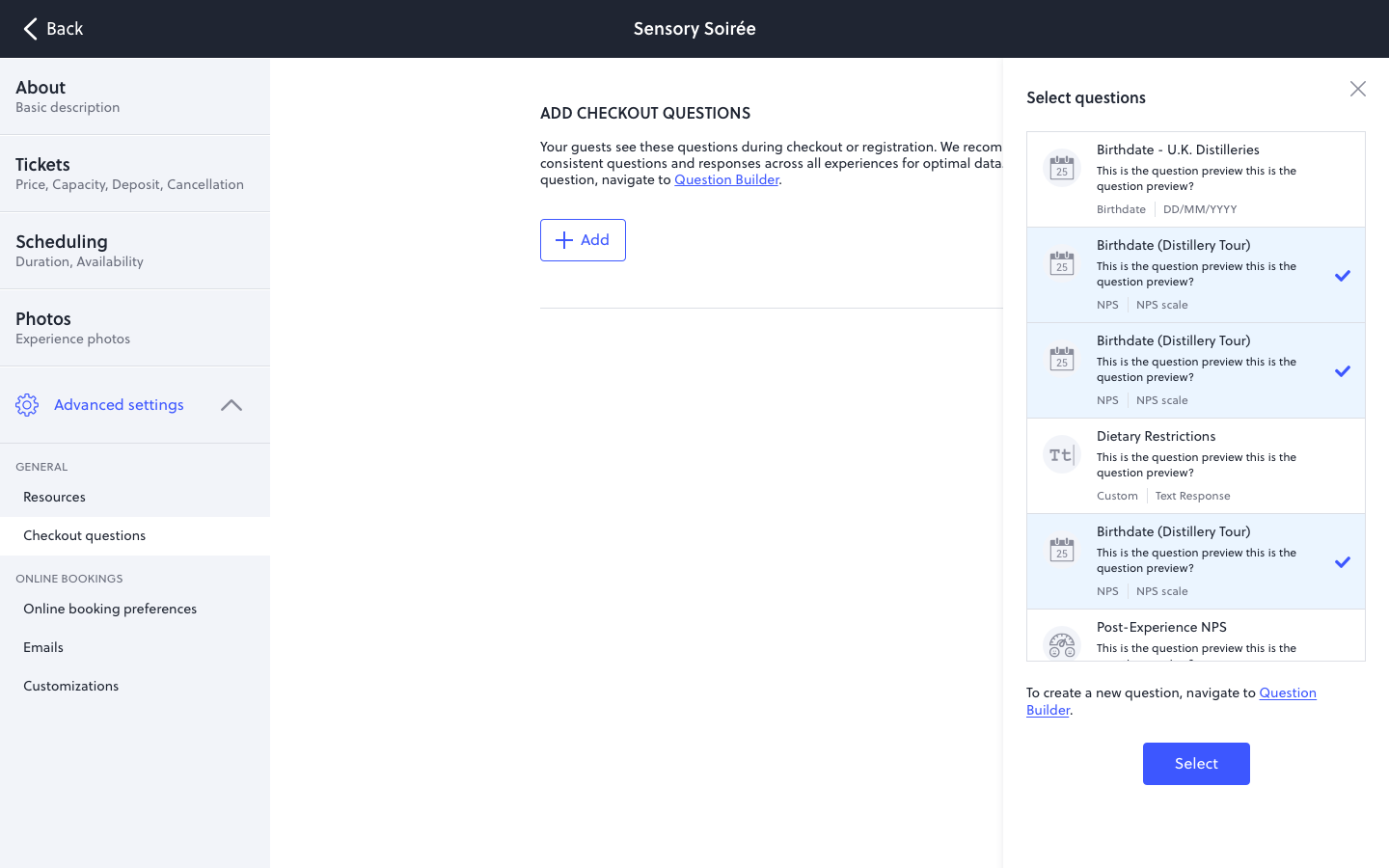

Question Builder: a question library for the survey-authoring operator.

Treat questions as first-class objects in a library.

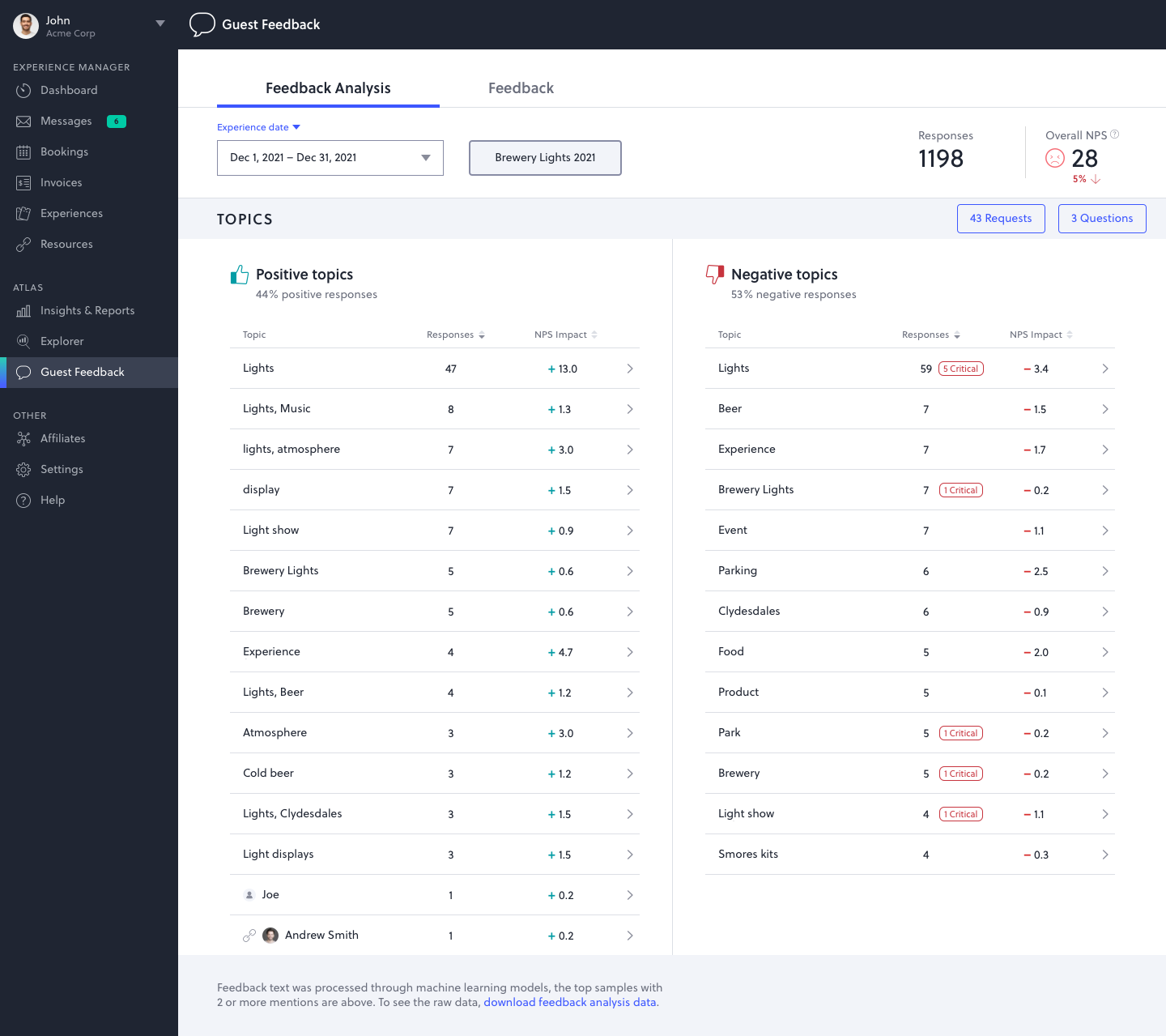

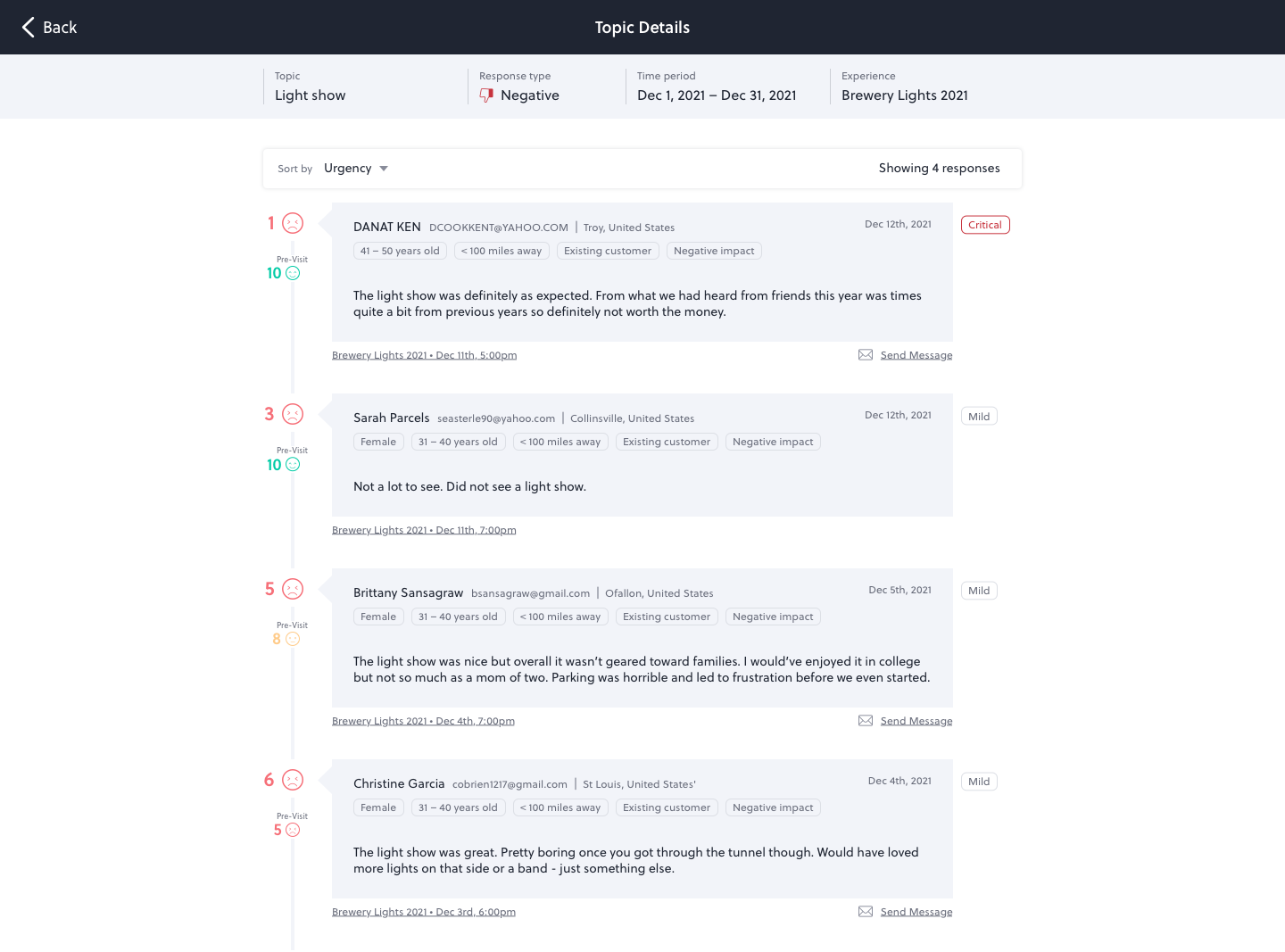

A brand-marketing director who needs to know what their guests actually think isn't a survey designer. They have a meeting in 20 minutes, and they need to ship a question that returns useful data. Question Builder is the surface that gets them there.

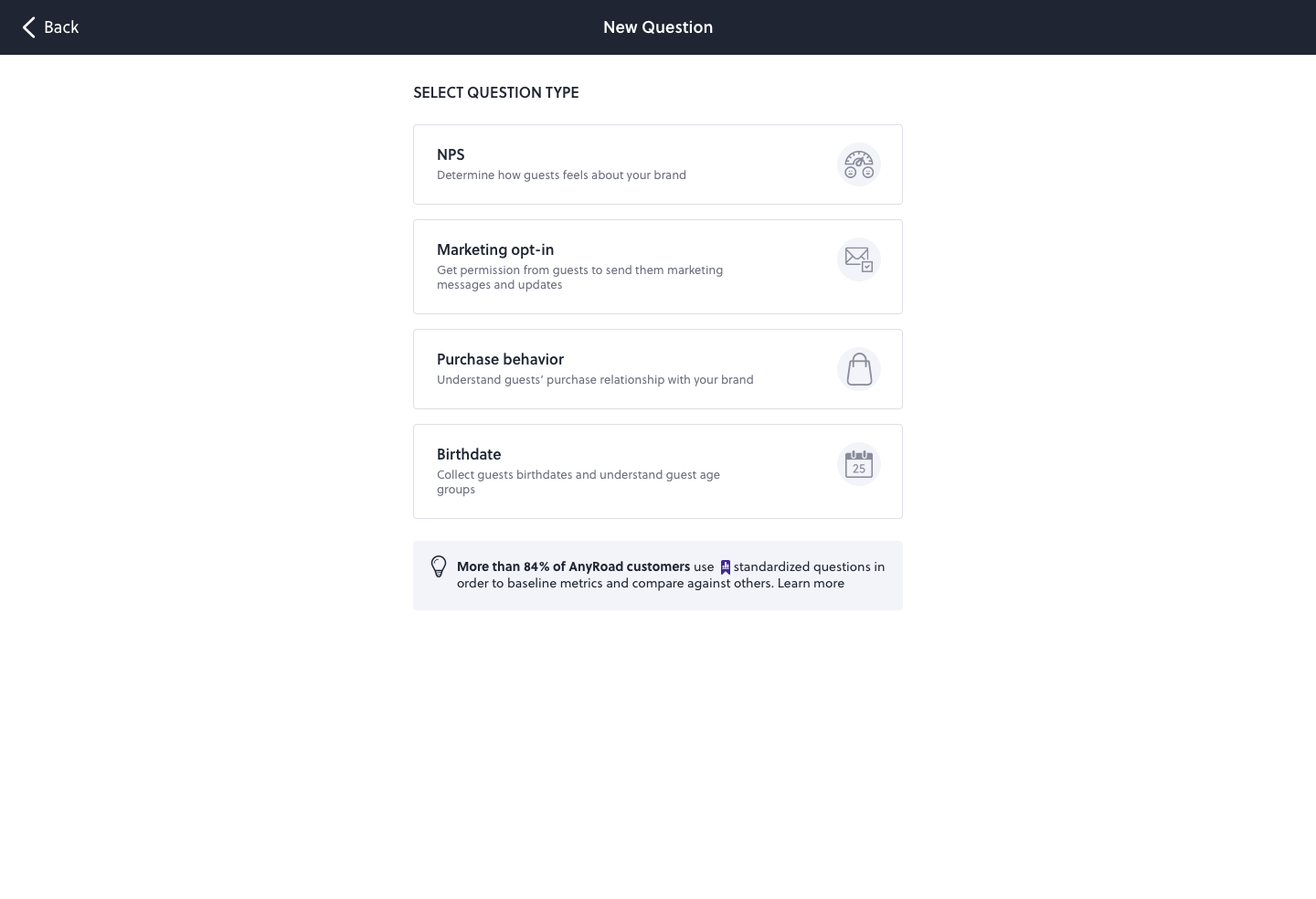

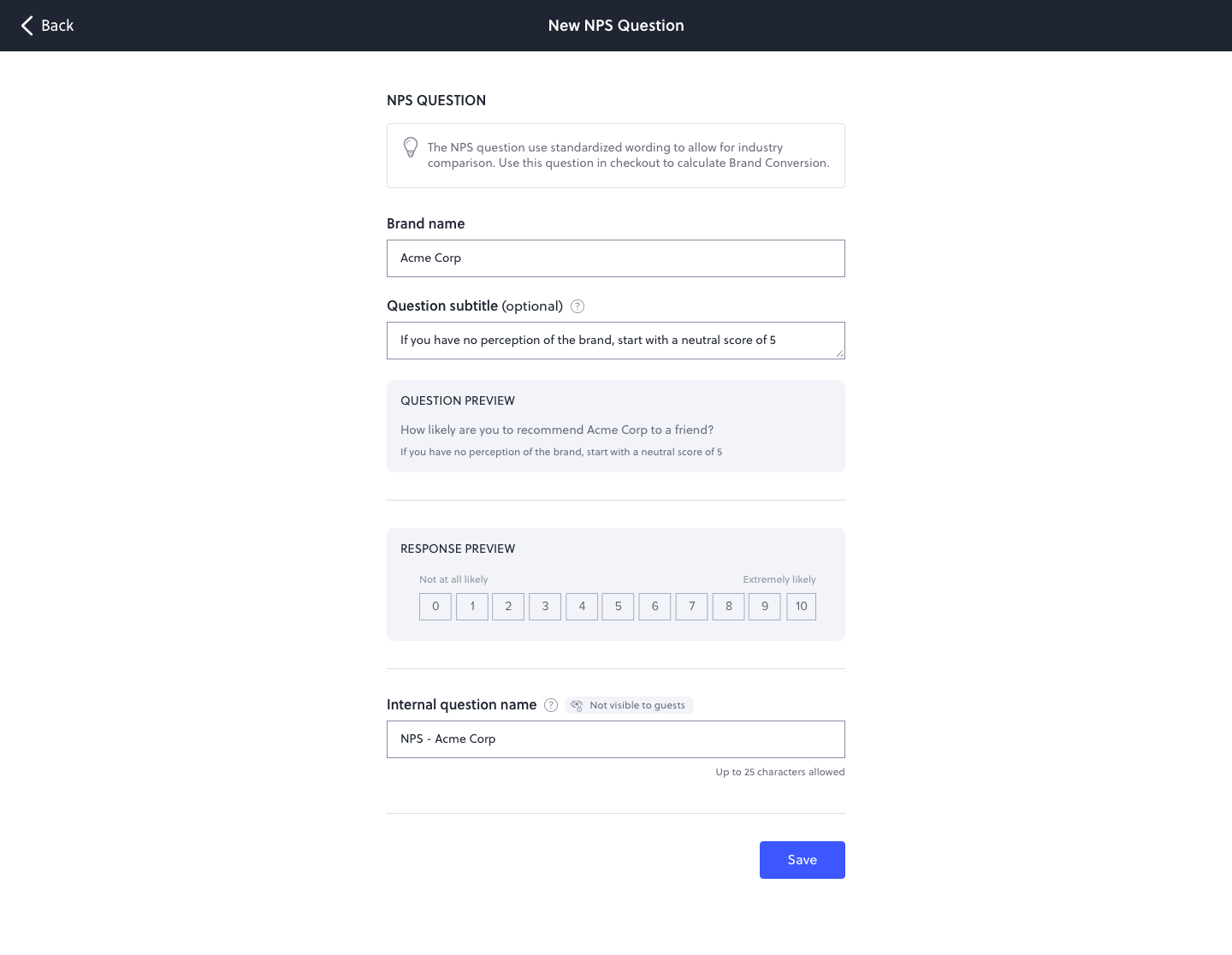

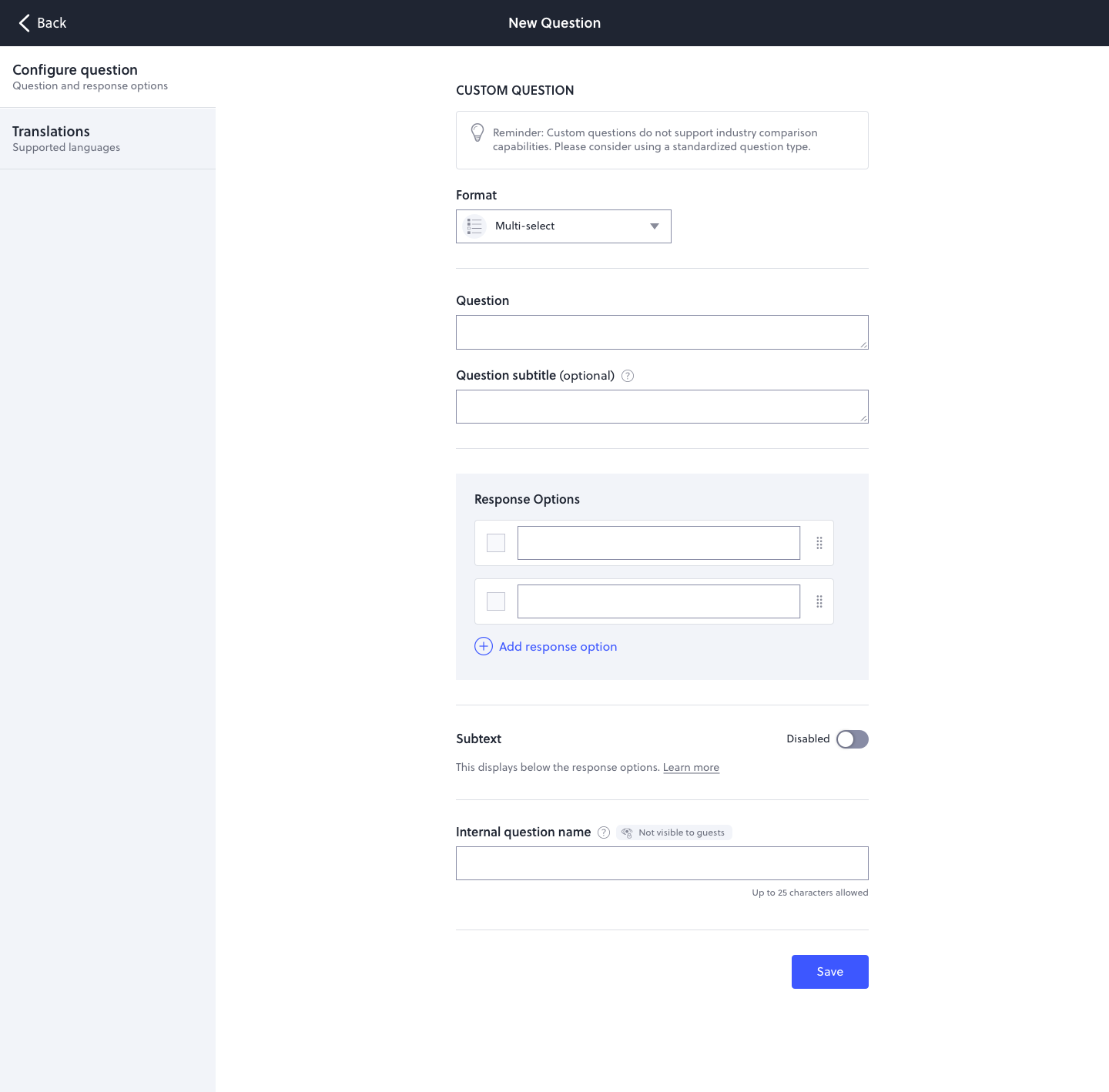

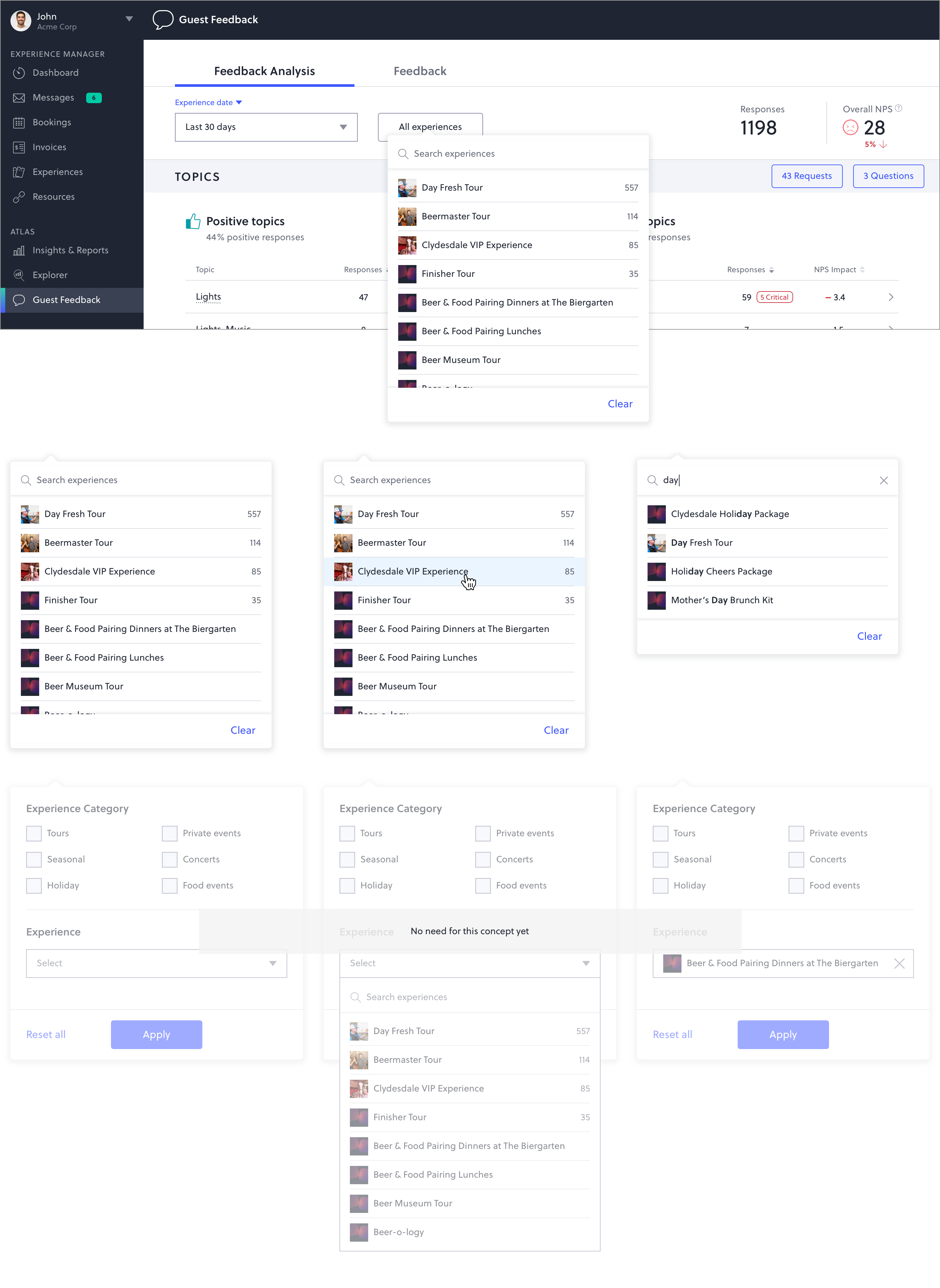

The product treats questions as first-class objects in a library. Operators build a question once, name it, configure it, translate it, and reuse it across every experience the brand runs. Standardized question types (NPS, Marketing Opt-in, Purchase Behavior, Birthdate) come with industry-validated wording so the data they collect is comparable to peer benchmarks downstream. Custom questions cover everything else, with response formats sized to what the operator is actually asking.
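
The Python below is a minimal sketch of what "questions as first-class objects" could mean for a single library entry. It is an illustration, not AnyRoad's data model. The `Question` class, its fields, the enum names, and the rule that a question is either standardized or custom (never both) are all assumptions drawn from the description above. Reuse is modeled as attaching one shared object to many experiences, so the usage count shown in the library comes straight from the set of experience IDs.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class StandardType(Enum):
    # Standardized types named in the case study; wording is fixed per type.
    NPS = "nps"
    MARKETING_OPT_IN = "marketing_opt_in"
    PURCHASE_BEHAVIOR = "purchase_behavior"
    BIRTHDATE = "birthdate"


class ResponseFormat(Enum):
    # The six custom response formats listed in the case study.
    FREE_TEXT = "free_text"
    MULTI_SELECT = "multi_select"
    RADIO = "radio"
    CHECKBOX = "checkbox"
    DROPDOWN = "dropdown"
    DATE_INPUT = "date_input"


# Assumption: these formats need a list of answer options to be usable.
CHOICE_FORMATS = {
    ResponseFormat.MULTI_SELECT,
    ResponseFormat.RADIO,
    ResponseFormat.CHECKBOX,
    ResponseFormat.DROPDOWN,
}


@dataclass
class Question:
    """Hypothetical library entry: built once, reused across experiences."""
    name: str
    prompt: str
    standard_type: StandardType | None = None     # set for standardized questions
    response_format: ResponseFormat | None = None  # set for custom questions
    options: list[str] = field(default_factory=list)
    experience_ids: set[str] = field(default_factory=set)

    def __post_init__(self) -> None:
        # Assumed invariant: exactly one of standard_type / response_format is set.
        if (self.standard_type is None) == (self.response_format is None):
            raise ValueError("set exactly one of standard_type or response_format")
        if self.response_format in CHOICE_FORMATS and not self.options:
            raise ValueError("choice formats need at least one option")

    @property
    def is_standard(self) -> bool:
        return self.standard_type is not None

    @property
    def usage_count(self) -> int:
        # "The count of experiences using it" shown in the library view.
        return len(self.experience_ids)

    def attach_to(self, experience_id: str) -> None:
        # Reuse references the same object; attaching twice is a no-op.
        self.experience_ids.add(experience_id)
```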

The latest enhancement adds conditional logic on top of the library: sub-questions that branch off the answer to a parent question, plus an AND/OR composer for compound rules.
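
The branching rules can be modeled as a small expression tree. Leaf conditions test a parent answer, and AND/OR groups combine them, nesting as deep as a compound rule needs. The sketch below is hypothetical throughout: `Condition`, `Group`, `SubQuestion`, `visible_sub_questions`, and the equality-only matching are illustrative choices. It shows only how the output of an AND/OR composer could be evaluated against a respondent's answers.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Condition:
    """One rule: the parent question's answer equals (or includes) a value."""
    question: str  # name of the parent question being tested
    value: str     # hypothetical: only equality/membership is modeled here

    def holds(self, answers: dict[str, object]) -> bool:
        answer = answers.get(self.question)  # None if the parent is unanswered
        if isinstance(answer, (list, set, tuple)):
            # Multi-select / checkbox answers: true if the value is among the picks.
            return self.value in answer
        return answer == self.value


@dataclass
class Group:
    """AND/OR composition; items may be conditions or nested groups."""
    op: str  # "AND" or "OR"
    items: list[Condition | Group]

    def holds(self, answers: dict[str, object]) -> bool:
        results = (item.holds(answers) for item in self.items)
        if self.op == "AND":
            return all(results)
        if self.op == "OR":
            return any(results)
        raise ValueError(f"unknown operator: {self.op!r}")


@dataclass
class SubQuestion:
    prompt: str
    show_when: Group  # branching rule attached to the sub-question


def visible_sub_questions(subs: list[SubQuestion], answers: dict[str, object]) -> list[str]:
    """Return prompts of sub-questions whose branching rule is satisfied."""
    return [s.prompt for s in subs if s.show_when.holds(answers)]
```

Treating a group as just another item lets the composer nest rules without special cases. An unanswered parent never satisfies a condition, so a sub-question gated only on that parent stays hidden.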

- Move 01: Library as the home. Every question authored is a saved entity with a name, type, preview, and the count of experiences using it. Authoring becomes inventory, not one-off work.

- Move 02: Standardized types unlock benchmarks. NPS, Marketing Opt-in, Purchase Behavior, and Birthdate ship with industry wording. 84% of AnyRoad customers use them by default, which is what makes cross-customer comparison possible in Industry Benchmarks downstream.

- Move 03: Custom for the long tail. Six response formats (Free Text, Multi-Select, Radio, Checkbox, Dropdown, Date Input) cover everything the standardized library doesn't.

- Move 04: Translations as a first-class concern. Spanish, Mandarin, and other languages live on the same screen as the source. When the source changes, an "Update Language Translations" notification fires automatically.

- Move 05: Lifecycle without surprises. New, Rename, Duplicate, Archive, Restore. Destructive actions (deleting collected data) require explicit confirmation.

- Move 06: Conditional logic as the latest layer. Sub-questions, conditions, and AND/OR composition for the authoring patterns that needed to grow up.
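
One way the automatic "Update Language Translations" notification in Move 04 could work is to stamp each translation with a fingerprint of the source wording it was made from. Any edit to the source then makes the stamps disagree. This is a hypothetical sketch. `LocalizedQuestion`, `fingerprint`, and `notify_update_translations` are illustrative names, and the notification is just a `print`.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass, field


def fingerprint(text: str) -> str:
    # Stable digest of the source wording a translation was made from.
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def notify_update_translations(langs: list[str]) -> None:
    # Stand-in for the in-product notification.
    print(f"Update Language Translations: {', '.join(langs)}")


@dataclass
class Translation:
    text: str
    source_fingerprint: str  # hash of the source at translation time


@dataclass
class LocalizedQuestion:
    source: str
    translations: dict[str, Translation] = field(default_factory=dict)

    def set_translation(self, lang: str, text: str) -> None:
        # Record which version of the source this translation matches.
        self.translations[lang] = Translation(text, fingerprint(self.source))

    def stale_languages(self) -> list[str]:
        current = fingerprint(self.source)
        return sorted(lang for lang, t in self.translations.items()
                      if t.source_fingerprint != current)

    def edit_source(self, new_source: str) -> list[str]:
        # Changing the source flags every translation made from older wording.
        self.source = new_source
        stale = self.stale_languages()
        if stale:
            notify_update_translations(stale)
        return stale
```

Comparing hashes of the source, rather than tracking edit timestamps, means a source edit that is later reverted does not leave translations flagged.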